Ethics of AI in Healthcare: Managing Risk, Compliance, and Trust

AI-related issues in healthcare rarely surface immediately. They often emerge later as denied claims, audit findings, or compliance questions tied to automated coding and documentation decisions. In today’s revenue cycle environment, nearly 41% of providers report that at least one in every ten claims is denied, and denial volumes continue to climb year over year. When denials rise, revenue, cash flow, and operational stability are all put at risk.

This is why the ethics of AI in healthcare is no longer a theoretical concern. For revenue cycle leaders, coding managers, and healthcare IT teams, ethical design directly affects claim accuracy, audit readiness, regulatory exposure, and organizational trust.

This article examines the ethics of AI in healthcare through a practical, operational lens, with a focus on medical coding automation and revenue cycle workflows. The goal is to understand how AI can be deployed responsibly to improve efficiency while maintaining transparency, accountability, and compliance at scale.

TL;DR

- AI ethics is now an operational issue. In medical coding and RCM, ethical gaps show up as denied claims, audit findings, and compliance risk.

- AI influences reimbursement at scale, so a lack of explainability, biased data, or weak governance can affect thousands of claims before issues are detected.

- Ethical healthcare AI rests on core principles: accuracy, fairness, transparency, human oversight, and alignment with regulatory standards like HIPAA and CMS.

- The biggest risks in AI-driven coding include algorithmic bias, black-box decisions, PHI governance failures, and unclear accountability for errors.

- Responsible AI requires built-in safeguards, such as explainable outputs, audit trails, continuous monitoring, and human-in-the-loop workflows to keep automation compliant and defensible.

Table of Contents

- The Role of AI in Healthcare and Why Ethics Matter

- 5 Core Ethical Principles for AI in Healthcare

- Organizational Challenges to Ethical AI Integration

- Key Ethical Risks in Automated Medical Coding and RCM

- Operational Safeguards Ethical AI Requires

- Ethics and Trust in AI-Driven Operations

- Putting Ethical AI into Practice with RapidClaims

- Conclusion

- FAQs

The Role of AI in Healthcare and Why Ethics Matter

AI is now embedded across healthcare, supporting everything from diagnostics and patient monitoring to administrative and revenue cycle workflows. What began as targeted experimentation is now influencing decisions that directly affect reimbursement, audits, and regulatory compliance.

As adoption expands, AI’s role is increasingly operational. In medical coding, documentation review, risk adjustment, and claims management, AI systems shape how revenue is captured and defended. The financial stakes are significant. In fiscal year 2023, CMS estimated that Medicare Fee-for-Service improper payments reached approximately $31.2 billion, with a large share tied to documentation and coding deficiencies.

This is where ethics becomes practical rather than theoretical. When AI systems recommend codes, flag documentation gaps, or influence risk scores, gaps in governance translate into real operational risk.

Key ethical failure points include:

- Limited explainability: Models that cannot clearly justify outputs make audits and payer reviews harder to defend.

- Biased training data: Skewed datasets can lead to systematic undercoding or overcoding across populations, providers, or payer types.

- Weak data controls: Poor governance increases exposure to privacy violations and regulatory penalties.

Unlike manual processes, AI operates at scale. A single design flaw can affect thousands of claims before it is detected. For revenue cycle, coding, and compliance teams, ethical AI directly impacts:

- Claim accuracy and denial rates

- Audit readiness and documentation defensibility

- Compliance with payer and federal requirements

- Trust across coding, compliance, and leadership teams

In healthcare operations, ethics is fundamentally about control and accountability as automation expands. Technical performance alone is not sufficient. Clear ethical standards are required to ensure AI systems remain accurate, fair, explainable, and compliant at scale.

5 Core Ethical Principles for AI in Healthcare

Ethical AI in healthcare is grounded in established biomedical ethics, but its value comes from how those principles are applied in real, regulated workflows. For revenue cycle, coding, and compliance teams, ethics is not theoretical. It determines how AI systems are designed, governed, and trusted in production.

1. Beneficence and Non-Maleficence

AI systems should improve outcomes without introducing harm. In medical coding and billing, this means increasing accuracy, reducing omissions, and preventing denials that delay reimbursement or create patient confusion.

An AI system that boosts throughput but introduces systematic errors or compliance gaps fails this principle, regardless of short-term efficiency gains. Continuous validation against payer rules, specialty guidelines, and audit findings is essential.

2. Justice and Fairness

AI trained on incomplete or biased data can produce uneven outcomes across patient populations, provider groups, or payer types. In revenue cycle workflows, this may appear as inconsistent HCC capture, undercoding for certain demographics, or variable reimbursement results.

Ethical AI requires ongoing monitoring, including regular audits, performance reviews, and bias assessments, to ensure consistent performance across clinical, geographic, and socioeconomic variables.

3. Accountability and Human Oversight

Automation does not transfer responsibility; healthcare organizations remain accountable because they oversee and approve AI-generated claims and must ensure compliance with regulations.

Ethical AI must preserve human control by giving coding managers and compliance leaders visibility into AI decisions and the ability to intervene when needed. Human-in-the-loop workflows are a requirement in regulated environments.
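One way to picture a human-in-the-loop workflow is a routing rule that decides which review queue each AI suggestion lands in. The sketch below is illustrative only: the `CodeSuggestion` type, the queue names, the 0.95 confidence threshold, and the choice of HCC as the high-risk code family are all assumptions made for the example, not values from any standard or product.

```python
from dataclasses import dataclass

# Hypothetical policy values; real thresholds would come from validation data
# and the organization's compliance policy.
AUTO_ACCEPT_CONFIDENCE = 0.95
HIGH_RISK_CODE_TYPES = {"HCC"}  # assumed example of a regulatory-sensitive code family


@dataclass
class CodeSuggestion:
    code: str
    code_type: str     # e.g. "ICD-10", "CPT", "HCC"
    confidence: float  # model confidence in [0, 1]


def route_suggestion(suggestion: CodeSuggestion) -> str:
    """Return the review queue for an AI-generated code suggestion."""
    # High-risk code families always get full coder review, whatever the confidence.
    if suggestion.code_type in HIGH_RISK_CODE_TYPES:
        return "coder_review"
    # Low-confidence suggestions also go to a coder.
    if suggestion.confidence < AUTO_ACCEPT_CONFIDENCE:
        return "coder_review"
    # Routine, high-confidence suggestions get lighter, sampled review.
    return "spot_check"
```

Under these assumptions no suggestion bypasses review entirely; the rule only decides how much human attention each one receives.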

4. Transparency and Explainability

Ethical AI systems must support explainable decision-making. Organizations need to understand how codes were assigned or suggested, particularly during audits, payer reviews, or regulatory inquiries. Explainability enables defensibility and ensures that AI strengthens compliance rather than obscuring it.

5. Regulatory Alignment

Ethical AI cannot operate outside regulatory constraints. HIPAA, CMS guidance, ICD-10, CPT, and HCC requirements form the baseline for acceptable use. AI systems supporting healthcare documentation must be designed to operate within these frameworks, not around them.

These principles only matter when they are embedded into system design and daily workflows. Ethical healthcare AI is defined by how reliably it supports accurate, explainable, and compliant decision-making at scale.

Organizational Challenges to Ethical AI Integration

While these ethical principles are widely understood, many healthcare organizations struggle to apply them consistently once AI systems move into production.

Common challenges include:

- Lack of leadership, ownership, and clear direction

- Incomplete or poorly enforced AI governance policies

- Insufficient training across clinical and administrative teams

- Gaps in technical and operational expertise

Ethical AI requires coordination across leadership, IT, data teams, clinicians, and compliance functions. Weakness in any of these areas can undermine safeguards and expose organizations to compliance and financial risk.

Ethical healthcare AI is not defined by intent or policy statements alone. It is defined by how consistently ethical principles are embedded into system design, governance structures, training programs, and daily workflows.

Organizations that treat ethics as an operational requirement, supported by clear policies, human oversight, transparency, and continuous assessment, are better positioned to benefit from AI while maintaining trust, protecting patients, and meeting regulatory expectations.

Key Ethical Risks in Automated Medical Coding and RCM

AI-driven coding and revenue cycle automation can improve speed and consistency, but they also introduce risks that must be managed deliberately. In healthcare, these risks show up as compliance exposure, audit pressure, and financial impact.

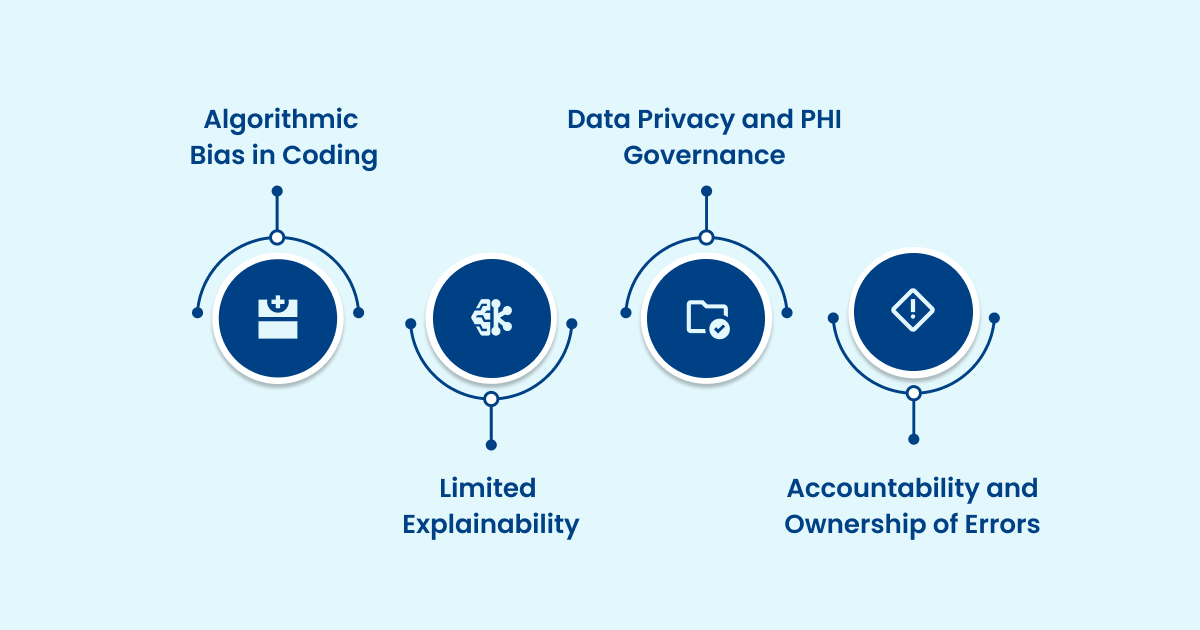

1. Algorithmic Bias in Coding and Risk Adjustment

AI models learn from historical data, so if that data contains disparities or uneven coverage, the AI may systematically favor or disadvantage certain patient groups or providers, leading to biased coding and reimbursement outcomes.

Common impacts include:

- Undercoding or overcoding for certain patient populations

- Inconsistent HCC or RAF score capture across providers

- Uneven denial exposure by payer, region, or specialty

- Audit findings that emerge long after errors are introduced

Because bias often develops gradually, continuous monitoring is essential.
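As a rough illustration of what continuous monitoring can look like, the sketch below compares HCC capture rates across groups and flags any group that drifts away from the overall rate. The record layout (`hcc_captured` plus a grouping field such as `provider_group`) and the 10-point tolerance are assumptions made for the example.

```python
from collections import defaultdict


def capture_rates(records, group_key):
    """Share of records with hcc_captured=True, per value of group_key."""
    totals = defaultdict(int)
    captured = defaultdict(int)
    for record in records:
        group = record[group_key]
        totals[group] += 1
        if record["hcc_captured"]:
            captured[group] += 1
    return {group: captured[group] / totals[group] for group in totals}


def flag_disparities(records, group_key, tolerance=0.10):
    """Return groups whose capture rate differs from the overall rate by more than tolerance."""
    if not records:
        return []
    overall = sum(1 for r in records if r["hcc_captured"]) / len(records)
    rates = capture_rates(records, group_key)
    return sorted(g for g, rate in rates.items() if abs(rate - overall) > tolerance)
```

A flagged group is a prompt for human review, not proof of bias: differences in case mix or documentation practice can also move these rates.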

2. Limited Explainability and Audit Readiness

Many AI systems generate outputs without clearly showing how decisions were made. In medical coding, this creates immediate compliance challenges.

RCM teams must be able to explain:

- Why a specific ICD, CPT, or HCC code was suggested

- Which documentation supported the recommendation

- Whether CMS and payer guidelines were applied correctly

Without explainability, defending AI-assisted decisions during audits or appeals becomes slow, difficult, and risky.
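The three questions above suggest what an explainable output needs to carry with it. The following is a minimal sketch of such a record, assuming a hypothetical `ExplainedSuggestion` structure in which each code points to exact spans of source documentation and names the guideline that was applied; the field names and the placeholder guideline reference are illustrative.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Evidence:
    document_id: str
    start: int  # character offset where the quoted text begins
    end: int    # character offset where it ends (exclusive)
    text: str   # the quoted documentation


@dataclass
class ExplainedSuggestion:
    code: str
    code_type: str
    evidence: list[Evidence] = field(default_factory=list)
    guideline_ref: str = ""  # identifier of the applied guideline (placeholder in this sketch)

    def is_auditable(self, documents: dict[str, str]) -> bool:
        """True if the suggestion cites a guideline and every quote matches its source."""
        if not self.evidence or not self.guideline_ref:
            return False
        return all(
            documents.get(e.document_id, "")[e.start:e.end] == e.text
            for e in self.evidence
        )

    def rationale(self) -> str:
        """Human-readable summary a coder or auditor could read."""
        quotes = "; ".join(f'"{e.text}" ({e.document_id})' for e in self.evidence)
        return f"{self.code_type} {self.code} per {self.guideline_ref}, supported by: {quotes}"
```

Checking each quote against the stored document is the design choice that matters here: a rationale an auditor cannot trace back to the chart offers little protection.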

3. Data Privacy and PHI Governance

Automated coding systems integrate directly with EHRs and claims platforms, increasing the stakes around data handling and access.

Key risk areas include:

- Unauthorized or unclear secondary use of PHI

- Weak role-based access controls

- Unclear data retention, reuse, or deletion policies

- Limited traceability across training and inference

HIPAA compliance is a baseline. Ethical AI also requires strict data minimization and end-to-end visibility into how data is used.
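Data minimization can be sketched as a deny-by-default filter that passes each role only the fields it needs. The roles and field names below are hypothetical, and the example shows the idea only; it is not a HIPAA compliance implementation.

```python
# Hypothetical role-to-field allowlist; a real mapping would come from access policy.
ROLE_FIELDS = {
    "coder": {"encounter_id", "clinical_note", "diagnoses", "procedures"},
    "billing_analyst": {"encounter_id", "payer", "claim_status"},
}


def minimize(record: dict, role: str) -> dict:
    """Return only the fields the given role is allowed to see.

    Unknown roles get an empty record (deny by default).
    """
    allowed = ROLE_FIELDS.get(role, set())
    return {key: value for key, value in record.items() if key in allowed}
```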

4. Accountability and Ownership of Errors

Automation does not remove responsibility. Healthcare organizations remain accountable for every submitted claim.

Ethical gaps arise when:

- Human review requirements are not clearly defined

- Ownership of AI-generated errors is unclear

- Remediation timelines for systemic issues are missing

- Vendor and provider responsibilities overlap

Clear accountability structures are critical to prevent automation from increasing risk rather than reducing it.

Operational Safeguards Ethical AI Requires

Identifying ethical risks is only the first step. Preventing them requires translating ethical expectations into concrete operational controls.

Effective safeguards include:

- Transparent documentation of training data sources and update processes

- Code-level explainability linked directly to source documentation

- Immutable audit trails capturing AI outputs and human actions

- Ongoing accuracy and fairness monitoring by payer and specialty

- Strong security controls and third-party risk management

AI operates across thousands of claims simultaneously. When issues go unnoticed, their impact multiplies quickly. Ethical safeguards keep automation observable, defensible, and controllable. For RCM leaders, ethics is not separate from performance. It is the foundation that makes large-scale automation sustainable.
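To make the audit-trail safeguard concrete, here is a minimal sketch of a hash-chained log, one common way to make a record of AI outputs and human actions tamper-evident. The entry fields are assumptions chosen for the example, and timestamps are left out to keep it short. A hash chain reveals after-the-fact edits; it does not prevent them, so a production system would pair it with write-once storage and access controls.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry
BODY_FIELDS = ("actor", "action", "payload", "prev_hash")


def _digest(body: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, actor: str, action: str, payload: dict) -> dict:
    """Append an entry whose hash covers its content and the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {"actor": actor, "action": action, "payload": payload, "prev_hash": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return True only if no entry has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {name: entry[name] for name in BODY_FIELDS}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

In this sketch, an AI suggestion and the coder's later approval or override would be appended as separate entries, so the log shows both what the model proposed and what a person decided.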

Putting Ethical AI into Practice with RapidClaims

Ethical AI in revenue cycle management only works when it is built into everyday workflows, not treated as a separate layer of governance. This is where platforms designed specifically for healthcare operations matter.

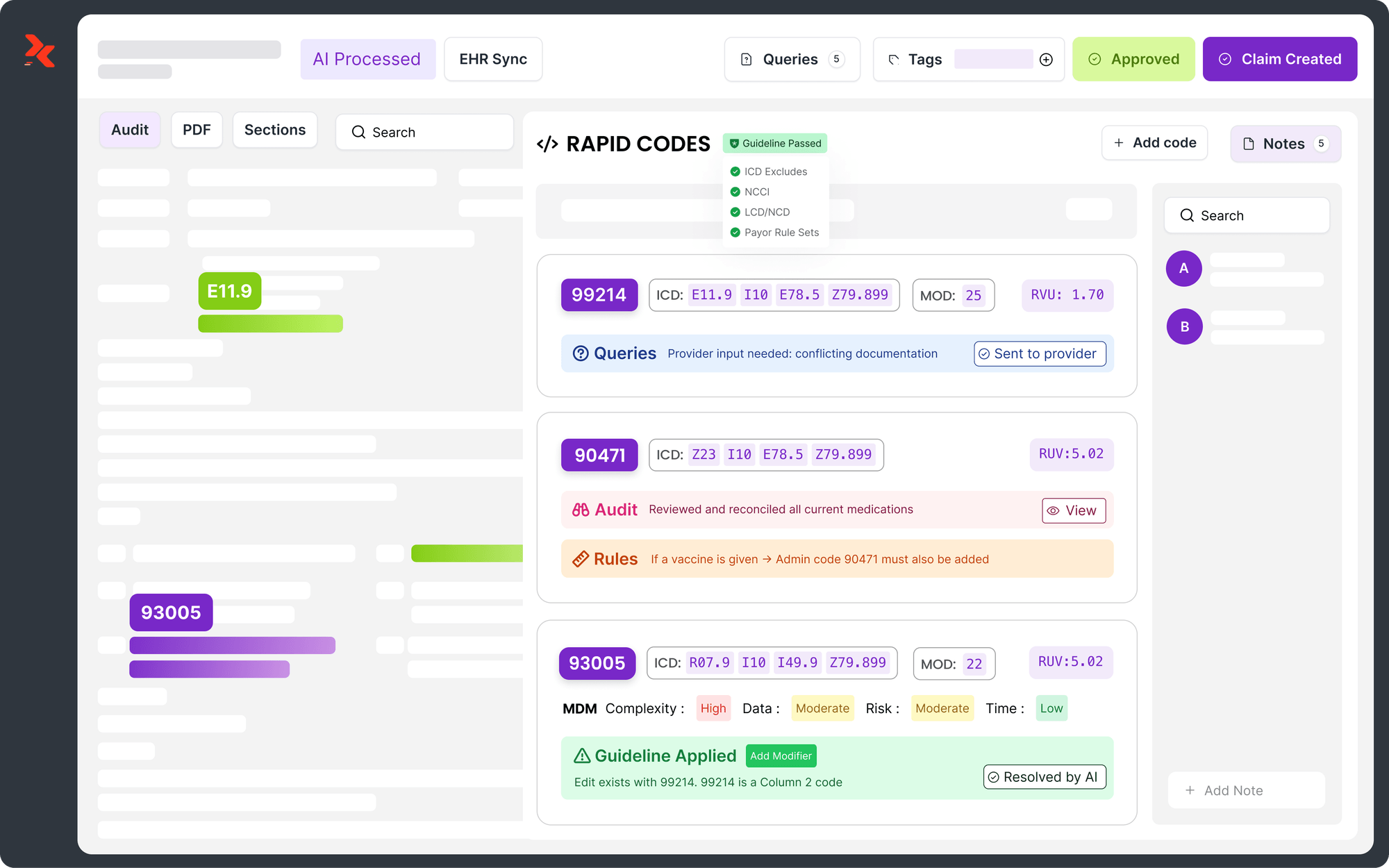

RapidClaims is an enterprise-grade, AI-powered RCM platform built with transparency, audit readiness, and compliance at its core. Its approach to automation emphasizes explainability, human oversight, and clear accountability across coding, documentation, and claims workflows.

RapidClaims supports responsible AI adoption through the following capabilities:

- RapidCode: AI-driven medical coding that processes up to 1,000 charts per minute with 96% accuracy, improving productivity by up to 170%.

- RapidCDI: Documentation intelligence that improves HCC capture by 24%, increases appropriate E&M levels by 18%, and delivers measurable revenue lift.

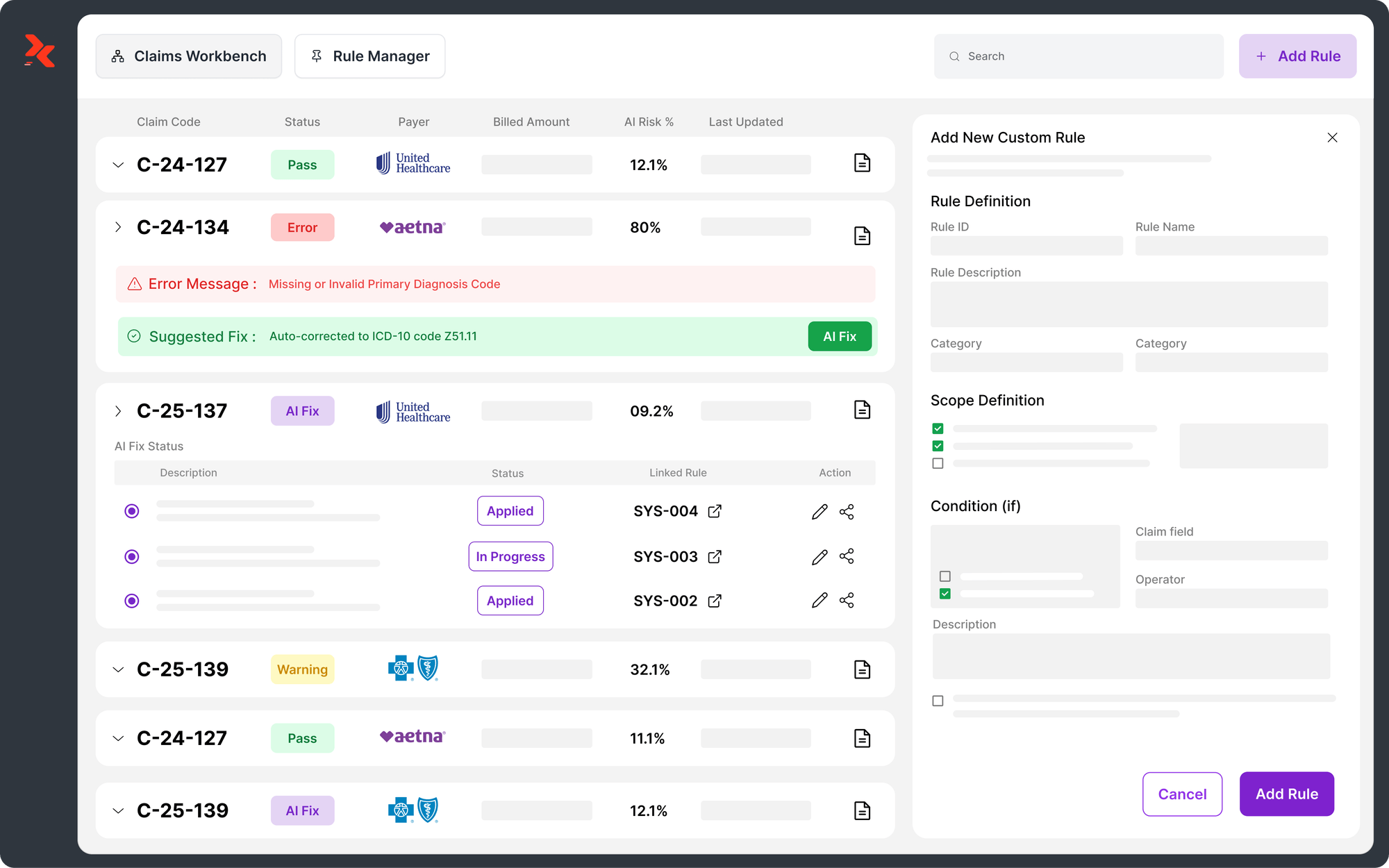

- RapidScrub: Proactive claim scrubbing that identifies issues before submission, reducing denials by 40% and shortening A/R recovery by five days.

- RapidRules Engine: Real-time application of complex payer rules to maintain compliance and optimize reimbursement as guidelines change.

- RapidAgents: AI assistants that support coding, monitoring, and appeals, saving teams more than two hours per day per user.

- Eligibility Verification and Prior Authorization: Streamlines scheduling and approvals, achieving 98% first-pass authorization success.

By aligning automation with ethical and regulatory expectations, RapidClaims demonstrates how AI can improve accuracy, reduce denials, and scale revenue cycle operations without introducing hidden risk.

Conclusion

AI can improve speed and accuracy across revenue cycle operations, but without ethical guardrails, it also increases risk. For medical coding and RCM leaders, ethical AI comes down to transparency, accountability, and compliance at scale.

Manual workflows are struggling to keep pace with rising chart volumes and regulatory complexity. AI offers real relief only when systems are explainable, auditable, and supported by clear human oversight. The ethics of AI in healthcare is what makes automation reliable, defensible, and sustainable.

Platforms built for healthcare operations enable organizations to scale AI responsibly by reinforcing human expertise. When ethics is embedded into daily workflows, AI becomes a sustainable driver of accuracy, fewer denials, and regulatory trust. Contact us to learn how RapidClaims supports ethical, audit-ready AI across coding and revenue cycle workflows.

FAQs

1. What makes AI ethical in medical coding?

Ethical AI in coding is defined by transparency, accountability, and compliance. Systems must provide explainable outputs, preserve human oversight, and maintain audit-ready documentation while reducing errors and denials.

2. Does AI replace human coders?

No. AI shifts coders toward review, validation, and exception management. Human expertise remains essential for complex, high-risk, or ambiguous cases.

3. Why is explainability important for AI-assisted coding?

Explainability allows coders, auditors, and compliance teams to understand why a code was suggested and which documentation supports it. This is critical for audits, appeals, and payer reviews.

4. Can AI increase audit or compliance risk?

Yes, if poorly governed. Ethical AI reduces risk by providing traceable decisions, consistent logic, and clear documentation aligned with CMS and payer guidelines.

5. Is human review always required for AI-generated codes?

Not always. Many organizations use a risk-based approach, requiring human sign-off for high-impact, regulatory-sensitive, or ambiguous codes while allowing lighter review for routine cases.

6. Who is accountable if an AI-generated code is challenged?

The provider or billing organization remains fully accountable. This is why transparency, audit trails, and defined oversight processes are essential.

Mary Degapogu

Mary Degapogu is a proficient medical coder with 6 years of experience in E/M Outpatient and ED Profee coding, focused on precise code assignment and documentation compliance to drive clean claims and revenue integrity at RapidClaims.
