How AI Is Improving Ambient Clinical Documentation Accuracy in 2026

Physicians spend nearly one-third of their working day on documentation. Yet despite EHRs, voice tools, and years of workflow optimization, inaccurate and incomplete clinical notes remain one of the most persistent and costly problems in healthcare.

Ambient intelligence entered the picture with a compelling promise: let the technology listen, transcribe, and draft notes while the clinician focuses on the patient. Adoption has followed quickly. But speed and accuracy are not the same thing. Reducing documentation time means nothing if the notes produced are incomplete, miscoded, or missing critical patient context.

So the real question isn't whether ambient AI saves time. It's whether it actually improves ambient clinical documentation accuracy, and under what conditions. That's exactly what this post answers.

At a Glance

- AI is improving ambient clinical documentation accuracy by capturing context. It uses NLP, speaker attribution, structured note output, HCC capture, and real-time coding logic to reduce documentation and billing errors.

- But ambient-only systems have limits. They miss historical context, struggle in nuanced specialties, can hallucinate details, and often fail without structured EHR integration.

- Accuracy improves significantly with proper integration. Specialty-specific models, structured data mapping, FHIR/SNOMED alignment, and field-level EHR embedding are critical best practices.

- RapidClaims closes the revenue accuracy gap. It strengthens coding, CDI, claim scrubbing, and denial recovery, turning documentation into clean, compliant, reimbursable claims.

Table of Contents

- The Documentation Accuracy Crisis in Healthcare

- What Is Ambient Clinical Documentation?

- 8 Ways AI Improves Ambient Clinical Documentation Accuracy

- 7 Best Practices to Implement AI For Better Ambient Clinical Documentation Accuracy

- How RapidClaims Bridges the Accuracy Gap

- Conclusion

- FAQs

The Documentation Accuracy Crisis in Healthcare

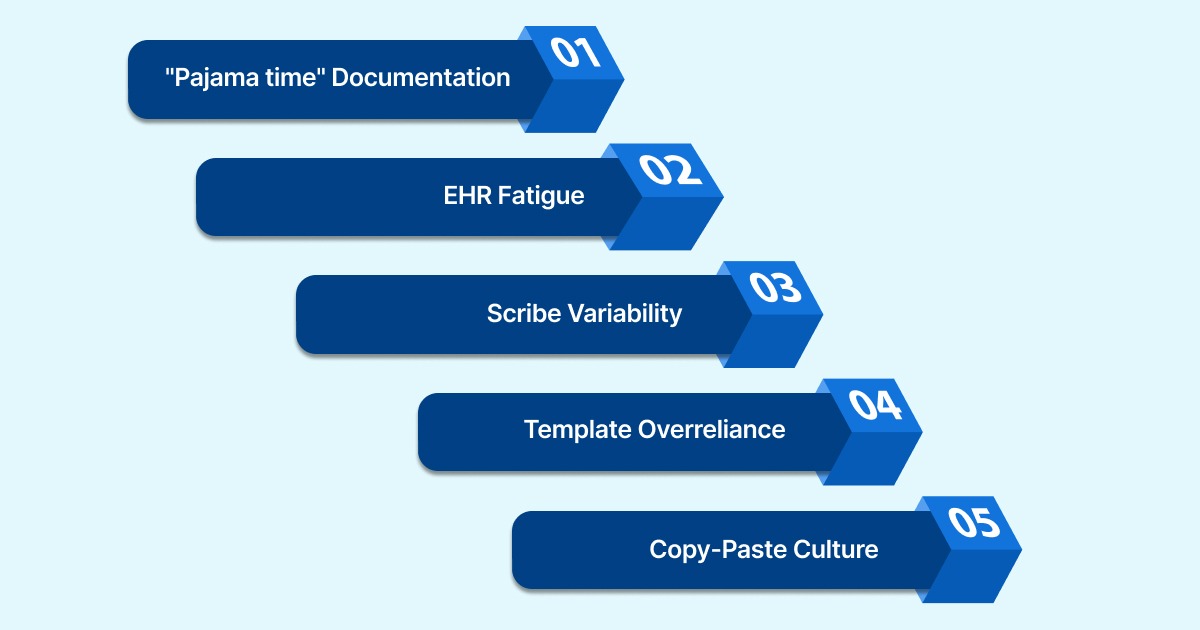

The problem isn't that clinicians don't document. It's that the conditions under which they document make accuracy nearly impossible to sustain. Consider what drives errors in clinical notes today:

- "Pajama time" Documentation: When physicians delay charting until after clinic hours, details blur. A visit documented at 11 PM lacks the nuance of one that happened at 9 AM. Fatigue compresses notes, omits context, and collapses complexity into vague summaries.

- EHR Fatigue: Digital records didn't fix the accuracy problem. They added interface friction to it. Clicking through templates, navigating dropdowns, and reconciling auto-populated fields creates its own kind of error: speed-clicking past what matters.

- Scribe Variability: Human scribes reduce physician typing but introduce inconsistency. Two scribes documenting the same encounter produce two different notes. Training, attention, and medical literacy all vary.

- Template Overreliance: Specialty templates are designed for the average encounter, not the actual one. Nuanced findings, offhand patient disclosures, or atypical presentations get squeezed into fields that don't fit, or left out entirely.

- Copy-Paste Culture: This is perhaps the most dangerous habit in modern EHR use. A problem list is carried forward from last quarter with no updates. Medications the patient stopped taking two years ago are still in the record. Copy-paste propagates errors across encounters without anyone catching them.

The downstream effects are serious: incorrect billing codes, denied claims, reduced reimbursements, failed audits, and care decisions made on incomplete information. Speed alone doesn't fix any of this. That's why ambient clinical documentation accuracy must be the benchmark, not just throughput.

What Is Ambient Clinical Documentation?

Ambient Clinical Documentation (ACD) is an AI-powered technology that listens to the natural conversation between a clinician and patient, identifies clinically relevant information, and automatically generates a structured note.

- It does not require the physician to type, dictate, or interrupt the encounter.

- The system uses speech-to-text to transcribe the conversation, then applies NLP to extract clinical meaning: symptoms, diagnoses, medications, and treatment plans.

- It then organizes that information into standard formats like SOAP notes or H&P structures.

The draft is then available for physician review before sign-off.

How Ambient AI Differs from Traditional Voice Dictation

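Ambient AI and traditional voice dictation both aim to simplify clinical documentation, but they work very differently. Dictation requires the physician to narrate the note. Ambient AI listens to the natural conversation and drafts the note without the physician typing, dictating, or interrupting the encounter.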

Ambient Clinical Documentation Benefits

The real value of ambient clinical documentation lies in operational, financial, and clinical impact.

Here are the key benefits:

- Physicians stay present. No switching between patients and screens mid-conversation.

- Notes are drafted by the end of the visit, cutting after-hours charting significantly.

- Documentation is more consistent because the AI captures details in real time, not from memory 30 minutes later.

- Patients get more focused attention. The visit isn't interrupted by typing.

- Reduced cognitive load means physicians end the day with less fatigue, which also reduces careless errors in late-day notes.

- Faster chart closure supports same-day billing, which tightens the revenue cycle.

That said, the benefits only hold if the underlying documentation is accurate. Generating notes faster doesn't help if those notes are incomplete, miscoded, or missing critical context. So how exactly does AI address the accuracy problem?

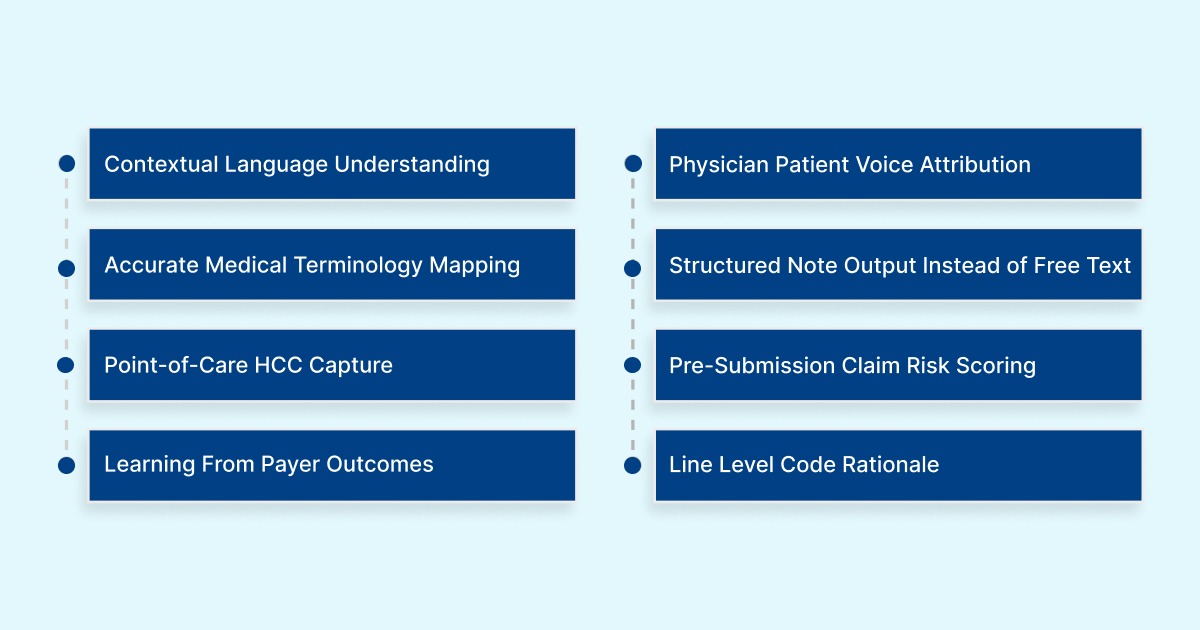

8 Ways AI Improves Ambient Clinical Documentation Accuracy

AI doesn't just make documentation faster. It changes the conditions under which errors occur in the first place. Here's where it makes a measurable difference:

1. Contextual Language Understanding, Not Just Transcription

Unlike basic speech-to-text, NLP-powered ambient systems interpret meaning from context. If a patient says, 'knee pain from last year is back,' the system understands this as a recurring condition, not a new complaint.

That distinction affects coding, diagnosis documentation, and continuity of care records. Without contextual understanding, even perfectly transcribed notes can contain clinically inaccurate information.

2. Voice Attribution Between Physician And Patient

Accurate documentation depends on correctly attributing what was said by whom. Speaker diarization separates the two voices throughout the recording and assigns each statement to the correct speaker.

This ensures that what the patient reported stays in the subjective section of the note and what the physician determined ends up in the assessment. This is exactly where downstream billing and coding systems expect to find it.
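The routing described above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: the `Utterance` type, the speaker labels, and the rule that every clinician statement belongs in the assessment are simplifying assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Utterance:
    speaker: str  # "patient" or "clinician", assumed to come from a diarization step
    text: str


def route_by_speaker(utterances):
    """Group diarized utterances into draft note sections by speaker."""
    sections = {"subjective": [], "assessment": []}
    for u in utterances:
        if u.speaker == "patient":
            sections["subjective"].append(u.text)
        elif u.speaker == "clinician":
            sections["assessment"].append(u.text)
        # Any other label is skipped here; a real system would flag it for review.
    return sections


encounter = [
    Utterance("patient", "My knee pain from last year is back."),
    Utterance("clinician", "This looks like recurrent patellofemoral pain."),
]
draft = route_by_speaker(encounter)
```

If diarization mislabels a speaker, the statement lands in the wrong section, which is why attribution quality matters before any coding logic runs.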

3. Accurate Medical Terminology Mapping

Clinical language is precise, and errors in terminology carry real consequences. A misspelled drug name, an incorrect anatomical reference, or a nonstandard abbreviation can cause a code to be assigned incorrectly.

Ambient documentation systems trained on medical corpora recognize thousands of clinical terms, map them to standardized codes (ICD-10, CPT, SNOMED CT), and apply the correct spelling and specificity automatically. This removes a significant source of variability that comes from different humans transcribing the same clinical language differently.

4. Structured Note Output Instead of Free Text

Rather than producing a wall of free text, AI organizes captured information into standard formats like SOAP or H&P.

When the assessment section contains actual diagnoses and the plan section contains specific orders, downstream processes, including coding and claim submission, can run accurately and automatically.
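As a rough sketch of why structure helps downstream automation, a structured note can be checked for empty sections before it is handed to coding. The `StructuredNote` fields and the `missing_sections` helper below are hypothetical names chosen for illustration, not a standard schema.

```python
from dataclasses import dataclass, field


@dataclass
class StructuredNote:
    subjective: str = ""
    objective: str = ""
    assessment: list = field(default_factory=list)  # diagnoses, one per entry
    plan: list = field(default_factory=list)        # orders or follow-ups, one per entry


def missing_sections(note):
    """Return the names of SOAP sections that are empty in this note."""
    missing = []
    for name in ("subjective", "objective", "assessment", "plan"):
        if not getattr(note, name):
            missing.append(name)
    return missing


note = StructuredNote(
    subjective="Recurring right knee pain.",
    assessment=["Recurrent patellofemoral pain"],
)
gaps = missing_sections(note)
```

A free-text paragraph offers no equivalent check: there is nothing to tell a coding step that the plan is missing.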

5. HCC And Risk Score Capture At The Point of Care

In value-based care contracts and Medicare Advantage plans, reimbursement is tied to how completely a patient's conditions are documented. If those conditions aren't captured with the right specificity in each encounter note, the RAF score drops, and the practice gets underpaid.

Platforms like RapidCDI™ surface HCC suspicions and RAF delta calculations before the note is signed, so physicians can confirm or add diagnoses while the encounter is still fresh. This is where ambient clinical documentation accuracy directly connects to revenue.
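To make the idea of a RAF delta concrete, here is a simplified worked example. It assumes an additive model (a demographic factor plus one coefficient per documented HCC) and ignores interaction terms and normalization. The coefficient values and HCC labels are invented for illustration and are not real CMS-HCC figures, and this is not a description of how RapidCDI™ computes its values.

```python
# Hypothetical coefficients for illustration only; NOT real CMS-HCC values.
ILLUSTRATIVE_COEFFICIENTS = {"HCC_A": 0.30, "HCC_B": 0.25, "HCC_C": 0.15}


def raf_score(demographic_factor, hccs):
    """Simplified additive RAF: demographic factor plus one coefficient per HCC."""
    return demographic_factor + sum(ILLUSTRATIVE_COEFFICIENTS[h] for h in hccs)


def raf_delta(demographic_factor, documented, suspected):
    """Change in RAF if every suspected HCC were confirmed and documented."""
    current = raf_score(demographic_factor, documented)
    potential = raf_score(demographic_factor, documented | suspected)
    return round(potential - current, 3)


# One HCC is already documented; a second is suspected but not yet in the note.
delta = raf_delta(0.40, documented={"HCC_A"}, suspected={"HCC_B"})
```

With these made-up numbers, confirming the suspected condition would raise the score from about 0.70 to about 0.95, a delta of 0.25. Surfacing that gap before sign-off is the point of the workflow described above.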

6. Pre-Submission Claim Risk Scoring

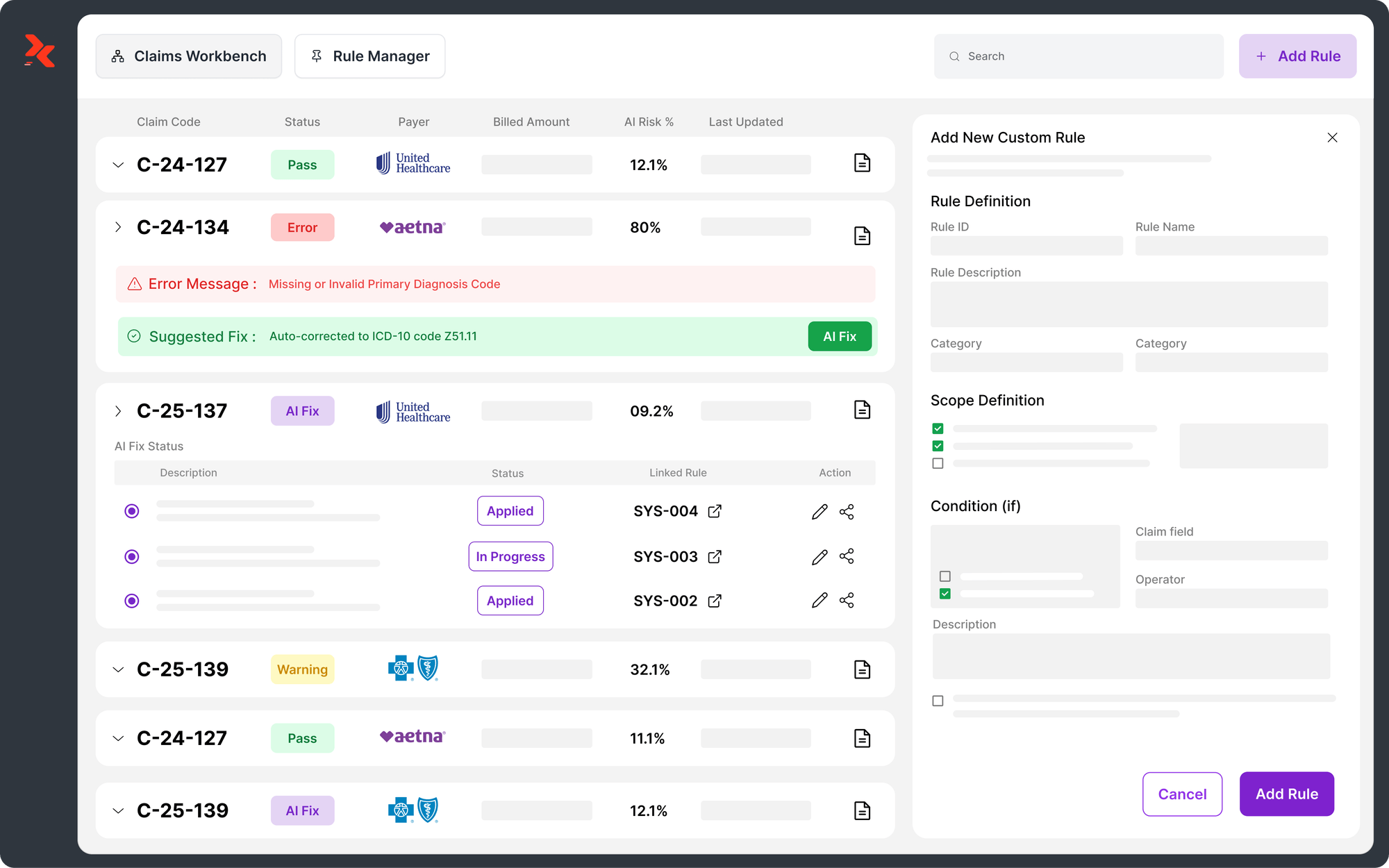

Not every claim carries the same risk of denial. Some contain coding combinations that payers routinely reject. Some AI platforms analyze claims against payer-specific rules before submission and assign a risk score to each claim.

High-risk claims are flagged for human review while low-risk claims move forward automatically. This creates a tiered accuracy system where human attention is allocated where it actually matters.
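The tiering logic itself is simple once a score exists. The sketch below assumes a risk score between 0 and 1 produced by some upstream model. The `route_claim` name and the 0.7 threshold are placeholders, not values taken from any platform.

```python
def route_claim(risk_score, review_threshold=0.7):
    """Send high-risk claims to a human queue and let the rest proceed."""
    if not 0.0 <= risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    return "human_review" if risk_score >= review_threshold else "auto_submit"


# Hypothetical claim IDs and scores, for illustration only.
claims = {"claim-001": 0.12, "claim-002": 0.85, "claim-003": 0.70}
routing = {claim_id: route_claim(score) for claim_id, score in claims.items()}
```

In practice the threshold is a policy decision: lowering it sends more claims to reviewers, raising it trusts the model more.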

7. Learning From Real Payer Outcomes Over Time

Static rules-based systems don't evolve. Payer policies change constantly: new edits, new LCD updates, new denial patterns. AI systems that retrain on actual payer responses (accepted claims, denials, appeal outcomes) stay current without manual updates.

For example, RapidClaims retrains weekly on payer-specific feedback, which means the system gets more accurate over time rather than gradually falling behind. Static rules-based tools can't do this; they require manual intervention every time a payer changes its logic.

8. Line-Level Rationale For Every Code Assigned

Accuracy means nothing without accountability. AI systems that produce line-level rationales for every code assigned allow coders and physicians to verify, correct, or accept suggestions with full transparency.

This allows reviewers to confirm correct codes quickly, catch wrong ones before submission, and defend every documented decision if a claim is audited. It also creates a continuous feedback loop: when a physician overrides a suggestion, that correction informs future accuracy.
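One way to picture a line-level rationale and the override feedback it enables is a small record per suggested code. The field names and the `record_review` helper are assumptions made for this sketch. The two ICD-10-CM codes are real, but the scenario is invented.

```python
from dataclasses import dataclass


@dataclass
class CodeSuggestion:
    code: str         # suggested code, e.g. an ICD-10-CM code
    rationale: str    # the note excerpt that supports the code
    source_line: int  # where in the note the excerpt appears


def record_review(suggestion, final_code):
    """Capture the reviewer's decision so overrides can be fed back later."""
    return {
        "suggested": suggestion.code,
        "final": final_code,
        "overridden": final_code != suggestion.code,
        "rationale": suggestion.rationale,
    }


suggestion = CodeSuggestion(
    code="E11.9",  # Type 2 diabetes mellitus without complications
    rationale="Patient has type 2 diabetes, glucose elevated today.",
    source_line=14,
)
# The reviewer decides the note supports a more specific code.
feedback = record_review(suggestion, final_code="E11.65")
```

Keeping the rationale next to the final decision is what makes the record usable both for audit defense and as a training signal.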


These eight capabilities work together to produce documentation that is faster and more accurate. But only when the system is set up with the right integrations and workflows.

Where Ambient Clinical Documentation Still Falls Short On Accuracy

Here's where most vendor-driven content goes quiet. Ambient documentation has real limitations. And understanding them is essential before building a documentation strategy around them.

- Ambient-only notes miss historical patient context: A 2025 study analyzing 354 primary care encounters found that notes generated from ambient audio alone scored 40.4 out of 100 on completeness. When combined with longitudinal patient history from EHR and HIE data, that score jumped to 82.9. Ambient capture, on its own, can only record what's said out loud.

- Specialty accuracy gaps: General medicine performs well at 95%–98% accuracy. But in neurology, psychiatry, and rheumatology, accuracy drops by 21%–42%. These specialties rely on nuanced, subjective language about mood episodes, pain quality, and cognitive symptoms, which current models struggle to interpret consistently.

- Hallucinations and fabricated details: AI-generated notes can produce 'certainty illusions'. These are confident-sounding statements that weren't said, or that misrepresent what was said. A recent scoping review flagged this as a meaningful risk in clinical AI documentation tools. Without physician review, these errors enter the permanent record.

- Free-text interoperability problems: Most ambient scribes output narrative prose. But FHIR-based systems, registries, and analytics platforms require coded, structured data. Free text that isn't mapped to structured fields creates downstream fragmentation.

So, ambient clinical documentation alone isn't enough to guarantee accuracy, and any practice that treats it as a set-and-forget solution will find that out quickly.

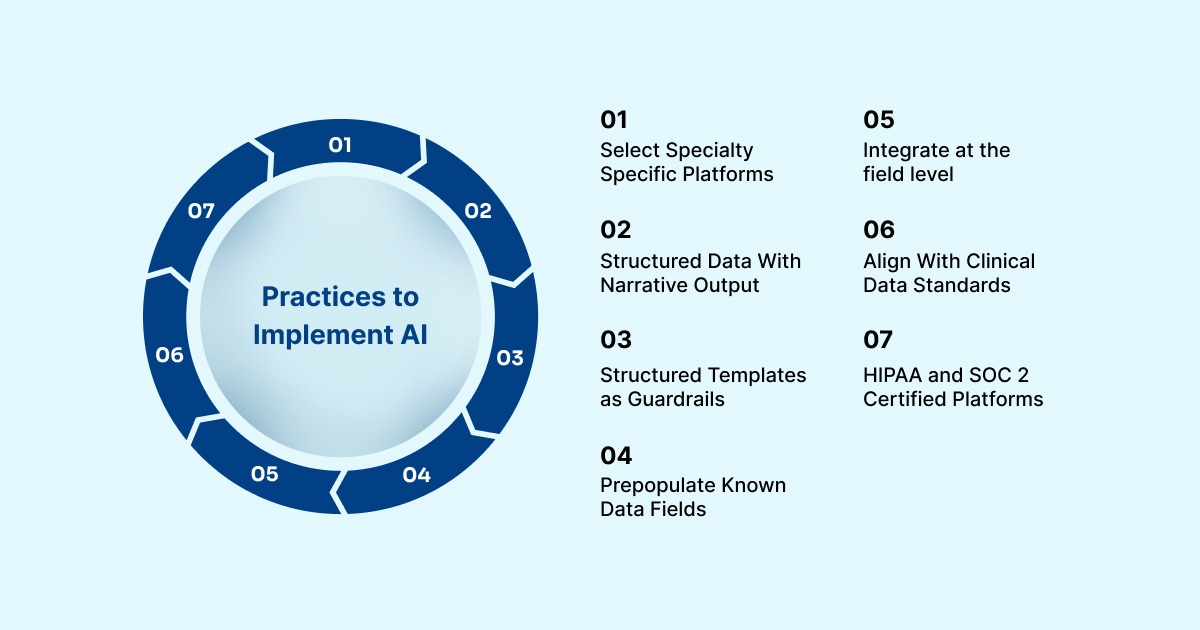

7 Best Practices to Implement AI For Better Ambient Clinical Documentation Accuracy

Getting clinical documentation accuracy right depends on how an ambient AI tool is deployed, integrated, and governed. Here are the practices that separate effective implementations from disappointing ones:

1. Choose platforms with specialty-specific models: A model trained on general medicine performs differently from one trained on nephrology or psychiatry. Before deployment, confirm the platform has been validated against the note types and terminology specific to your specialty. Generic accuracy claims don't translate uniformly across clinical contexts.

2. Require structured data output alongside narrative: A note that reads well but doesn't populate structured EHR fields is only half useful. Insist on platforms that map documented content to discrete fields such as diagnoses, medications, and problem lists. This is what makes ambient clinical documentation integration with your EHR actually functional.

3. Use structured templates as a quality guardrail: Templates with required fields prevent omissions. Controlled vocabularies eliminate the inconsistency that free-text introduces. Combining ambient voice capture with SNOMED-coded clinical forms keeps the note human-readable while the underlying data becomes structured and machine-processable.

4. Pre-populate known data to reduce re-entry errors: Medications, allergies, and prior diagnoses already exist in the EHR. The system should pull them from that record rather than re-entering them. Pre-population reduces both the time burden and the risk of conflicting or duplicate entries.

5. Integrate at the field level, not just as an attachment: Native EHR embedding, where AI-generated content populates specific structured fields directly, is fundamentally different from copying a generated note into a text box. Real-time sync also eliminates transcription lag, which can introduce errors when notes are queued for later processing. This is the foundation of sound ambient clinical documentation integration.

6. Align with FHIR, SNOMED CT, and LOINC standards: If AI output doesn't align with interoperability standards, it creates isolated documentation that can't be exchanged, analyzed, or used for quality reporting. These standards are the infrastructure of ambient clinical documentation compliance, and any platform you deploy should be built around them.

7. Prioritize platforms with HIPAA compliance and SOC 2 certification: Patient conversations are among the most sensitive data in healthcare. Platforms must demonstrate encryption at rest and in transit, role-based access controls, and formal compliance certifications. This is non-negotiable under ambient clinical documentation compliance requirements.

So, ambient clinical documentation is most accurate when it's not a standalone transcription tool but an integrated layer within a broader documentation and coding workflow.
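To illustrate what structured, standards-aligned output (practices 2 and 6) can look like, the sketch below builds a minimal FHIR `Condition` resource as a Python dictionary, coded with SNOMED CT. It is a fragment for illustration: the `to_fhir_condition` helper and the patient ID are hypothetical, and the result is not validated against any FHIR profile, which would typically require more elements.

```python
import json


def to_fhir_condition(patient_id, snomed_code, display, note_text):
    """Build a minimal FHIR Condition resource coded with SNOMED CT."""
    return {
        "resourceType": "Condition",
        "subject": {"reference": f"Patient/{patient_id}"},
        "code": {
            "coding": [
                {
                    "system": "http://snomed.info/sct",
                    "code": snomed_code,
                    "display": display,
                }
            ],
            # The narrative phrase from the note is kept alongside the code.
            "text": note_text,
        },
    }


condition = to_fhir_condition(
    patient_id="example-123",  # hypothetical identifier
    snomed_code="44054006",    # SNOMED CT concept for type 2 diabetes mellitus
    display="Type 2 diabetes mellitus",
    note_text="Patient has type 2 diabetes, managed with metformin.",
)
payload = json.dumps(condition, indent=2)
```

The same diagnosis left only as a sentence in a narrative note would be readable by a person but invisible to registries and analytics that query coded fields.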

How RapidClaims Bridges the Accuracy Gap

Even with strong ambient AI at the front end, documentation errors still reach the billing layer. Codes are missed. Claims are submitted with insufficient specificity. Denials follow, and each one costs time, staff effort, and revenue.

RapidClaims is an AI-powered revenue cycle intelligence platform built specifically to fix the accuracy gaps that ambient documentation leaves behind. It helps you turn incomplete or error-prone clinical records into clean, compliant, reimbursable claims.

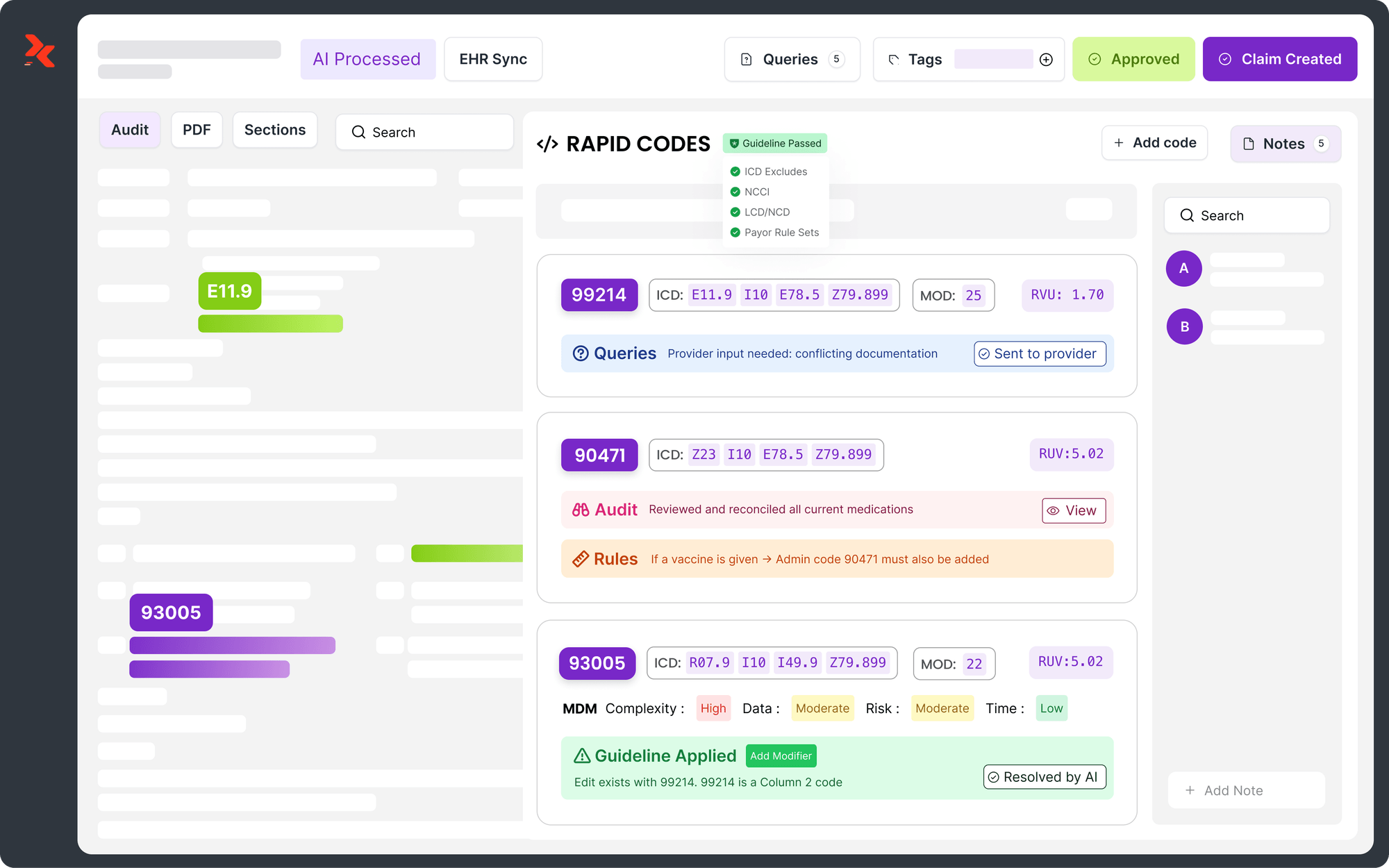

Here is how each module addresses a specific accuracy failure point:

- RapidCode™, fixing coding errors before they become denials: It runs autonomous ICD-10, CPT, and E/M coding at 1,000+ charts per minute, applying 100% guideline coverage across 36+ specialties. It processes what the note says and assigns the most defensible, accurate code, with a line-level rationale attached so physicians and coders can verify every decision.

- RapidCDI™, catching what ambient scribes miss before the note is signed: It works at the point of care, pulling from longitudinal patient history to surface HCC suspicions and RAF delta before the physician signs off. Physicians get a one-click prompt to confirm or query the diagnosis. This produces a 25% average RAF accuracy lift and saves approximately 30 minutes of MD chart review time per day.

- RapidScrub™, stopping inaccurate claims from reaching payers: It applies 119 million machine-learned smart edits, refreshed daily from payer bulletins, to every claim before submission. It flags high-risk claims for human review while low-risk claims move forward automatically. This reduces denials by 70% and accelerates A/R recovery by five days.

- RapidRecovery™, recovering revenue from claims that slipped through: The system drafts payer-specific appeal letters, tracks submission and response, and feeds the outcome data back into RapidScrub™ so the same denial pattern doesn't repeat. It's the closed-loop piece that converts lost revenue into a learning mechanism.

RapidClaims integrates with Epic, Cerner, Athena, and eClinicalWorks via SMART-on-FHIR and HL7. Most practices go live within 30 days with no disruption to existing workflows.

Conclusion

Ambient clinical documentation accuracy is improving, but it isn't a solved problem. AI has made real strides in NLP, coding automation, and structured note generation. At the same time, ambient-only capture misses critical historical context, struggles in complex specialties, and still requires physician review to be clinically and legally defensible.

RapidClaims addresses the billing accuracy layer directly, where documentation gaps translate into denied claims and lost revenue. With 96%+ coding accuracy, 70% denial reduction, a 30-day go-live timeline, and success-based pricing on recovery services, it's built for practices that need measurable results without overhauling their existing workflows.

If improving ambient clinical documentation accuracy and reducing claim denials is a priority for your organization in 2026, request a personalized demo today.

FAQs

1. What's the difference between ambient clinical documentation accuracy and transcription accuracy?

Transcription accuracy measures how correctly speech is converted to text. Clinical documentation accuracy measures whether the resulting note is clinically complete, correctly coded, and suitable for billing and care decisions. A system can achieve high transcription accuracy but still produce a note that misses critical diagnoses, uses incorrect code specificity, or lacks the historical context required for audit-ready documentation.

2. Can ambient clinical documentation tools work across multiple languages?

Some platforms are beginning to offer multilingual support, but coverage varies significantly by vendor. Most current ambient AI systems are optimized for English, with Spanish support emerging in select platforms. For practices serving diverse patient populations, language support should be a direct evaluation criterion.

3. How does ambient clinical documentation affect HIPAA compliance obligations?

Any technology that records patient-provider conversations is handling protected health information (PHI). HIPAA obligations apply fully, including business associate agreements (BAAs) with the vendor, data encryption in transit and at rest, and access controls. Practices should confirm that the ambient platform does not retain patient recordings after note generation and operates on HIPAA-compliant infrastructure.

4. Does ambient AI work for telehealth encounters the same way it works in-person?

Most ambient documentation platforms support telehealth encounters, but audio quality and diarization accuracy can differ from in-person visits due to compression artifacts, background noise, and connectivity issues. Practices running significant telehealth volume should request telehealth-specific accuracy benchmarks from vendors before deployment.

5. How long does it typically take to see measurable accuracy improvements after implementing ambient AI?

Most organizations begin seeing measurable gains in chart closure rates, coding accuracy, and denial rates within 30 to 60 days of implementation, assuming proper EHR integration and staff onboarding. Platforms that retrain on payer feedback continuously, like RapidClaims, show compounding improvement over 3–6 months as the system learns specialty-specific and payer-specific patterns.

Rejones Patta

Rejones Patta is a knowledgeable medical coder with 4 years of experience in E/M Outpatient and ED Facility coding, committed to accurate charge capture, compliance adherence, and improved reimbursement efficiency at RapidClaims.
