AI in Medicine: A 2026 Guide for U.S. Healthcare Operations

AI in medicine is no longer just a theory. It is now essential for U.S. healthcare groups facing high pressure to improve coding, cut claim denials, and boost revenue in a tough regulatory and payer setting.

About 80% of hospitals and health systems now report using AI to improve operational efficiency and patient care workflows, including administrative processes such as billing and documentation. Meanwhile, manual coding and billing remain costly and error-prone.

Traditional automation tools such as computer-assisted coding (CAC) and rules-based scrubbers are hitting performance ceilings, whereas modern applications of artificial intelligence in medicine, particularly those that use ML, are moving beyond pilot phases into full production.

This shift is especially material in U.S. revenue cycle operations, where payer policies, documentation quality, and coding accuracy directly impact cash flow.

This guide focuses on how healthcare leaders should think about AI in medicine from an operational standpoint, not as an abstract technological trend. It covers real applications, relevant compliance factors, technical foundations, and the practical benefits that AI delivers across modern revenue cycle workflows.

Key Takeaways

- AI in medicine delivers real value in revenue cycle operations, not theory. Coding, documentation, denial prevention, and risk adjustment are where AI is already producing measurable impact.

- Legacy CAC and rules engines can't keep up with payer and CMS changes. Learning-based AI is required to reduce rework and prevent denials at scale.

- Explainability is essential for regulated healthcare workflows. AI must show documentation-level evidence and audit trails to be deployable.

- Compliance alignment determines success or risk. Effective AI operates within ICD-10, CPT, HCC (V28), CMS, and HIPAA requirements.

- RapidClaims applies AI across the entire encounter-to-claim lifecycle. Unified coding, CDI, and denial prevention drive faster reimbursement and lower risk.

Table of Contents:

- What AI in Medicine Means for Healthcare Organizations

- Where AI Is Actively Used in Medicine Today

- Why Traditional Systems Fail to Operationalize AI

- How AI Fits into U.S. Healthcare Compliance Frameworks

- Real-World Results: What AI Delivers in Practice

- How Healthcare Leaders Should Evaluate AI Platforms

- Conclusion

- FAQs

What AI in Medicine Means for Healthcare Organizations

In clinical settings, AI in medicine often conjures images of diagnostic algorithms and radiology assistants. While those applications are essential, the term also, and increasingly, refers to systems that interpret unstructured clinical data, automate complex operational tasks, and improve financial performance for healthcare providers.

At an operational level, AI integrates machine learning (ML) and natural language processing (NLP) to interpret clinical and administrative data, identify patterns, and assist decision-making in coded workflows.

These technologies automate what traditionally required human review, reduce variability, and improve consistency:

- Machine Learning (ML) models learn from large amounts of historical billing and clinical data to predict outcomes such as coding accuracy or denial risk.

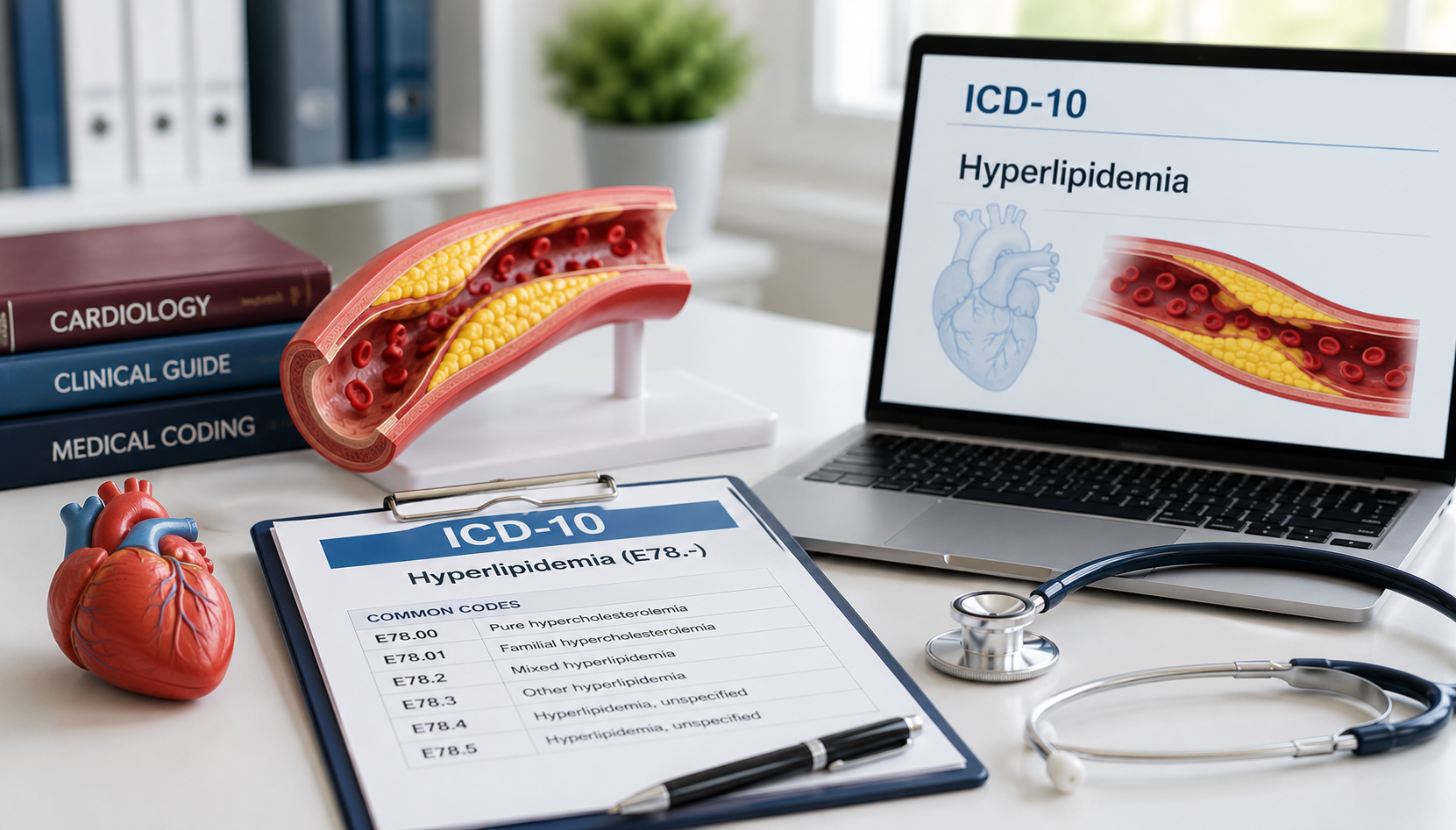

- Natural Language Processing (NLP) extracts meaningful, standardized information from narrative documentation, such as physician notes or discharge summaries. This enables automated ICD-10, CPT, and HCC (Hierarchical Condition Category) coding from free text without manual keying.

Together, ML and NLP are now widely used not just in research and diagnostics, but in mission-critical administrative systems that sit between clinical documentation and payer reimbursement.

This operational definition matters most when applied to real workflows. The next step is seeing where AI is already delivering results in practice.

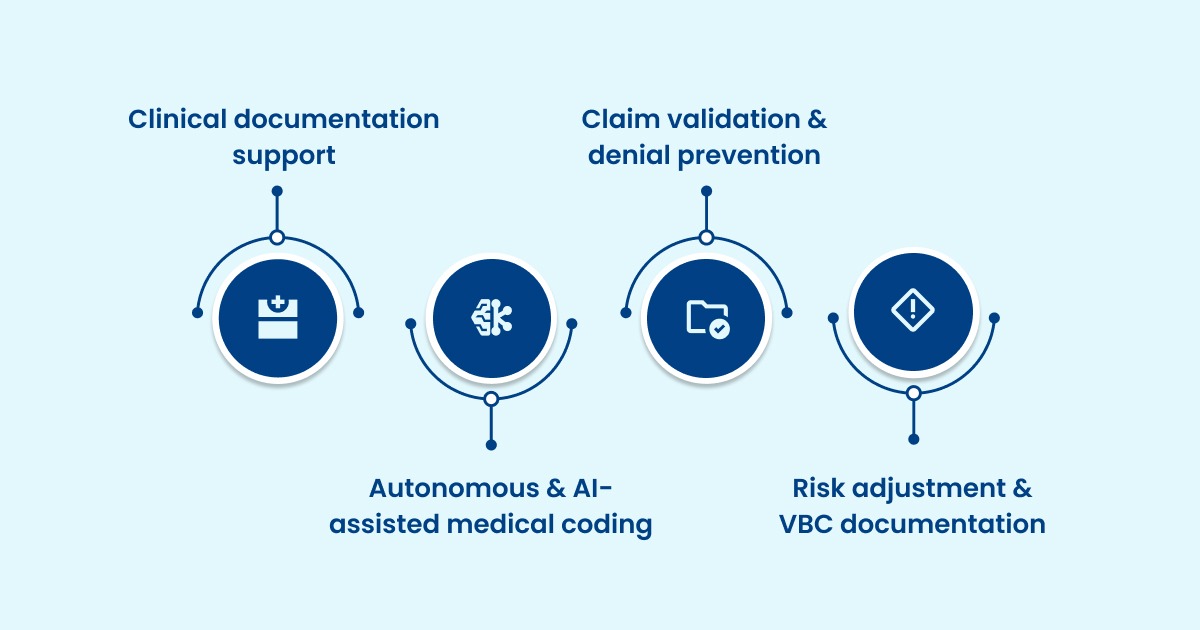

Where AI Is Actively Used in Medicine Today

Most "AI in medicine" journal-style articles describe broad categories such as imaging and drug discovery. That's not where most healthcare operators see near-term ROI. In U.S. provider organizations, medicine and AI intersect most clearly in workflows tied to reimbursement, compliance, and clinician time.

Below are the operational use cases already in production with the strongest evidence of impact.

1. Clinical documentation support

Documentation is now a primary deployment area for medical AI because it targets a measurable bottleneck: time.

- AMA survey data (2024) shows physicians work 57.8 hours/week on average, with only 27.2 hours spent on direct patient care. Physicians also report 13 hours/week on indirect care tasks such as documentation and order entry.

- That gap is why many systems have adopted AI note-drafting tools ("ambient AI" or AI scribes). These tools create structured drafts from encounter conversations, then rely on clinician review.

In real-world rollouts, large-scale adoption is measured in encounter volume rather than in pilots, signaling operational maturity.

2. Autonomous & AI-assisted medical coding

Medical coding is one of the highest-frequency, highest-variance workflows in healthcare operations. Minor errors create downstream denials, appeals, and delayed reimbursement.

Denial data across the system makes the stakes clear.

- Medicare Advantage denial rates have been quantified in peer-reviewed analyses, with one study reporting that 17% of initial claims were denied. A large share of those denials was later overturned, suggesting they reflected process friction and rework rather than questions of clinical appropriateness.

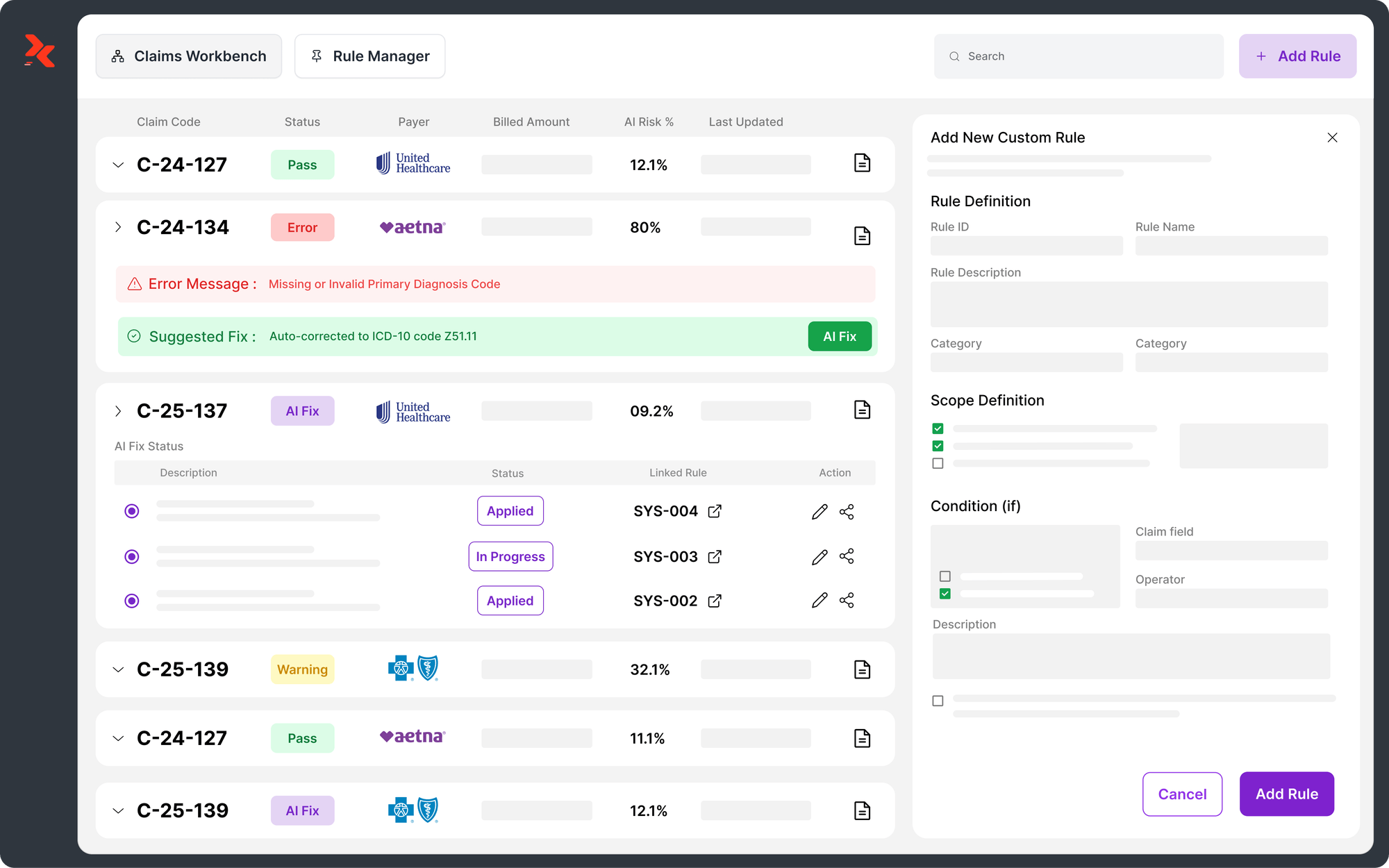

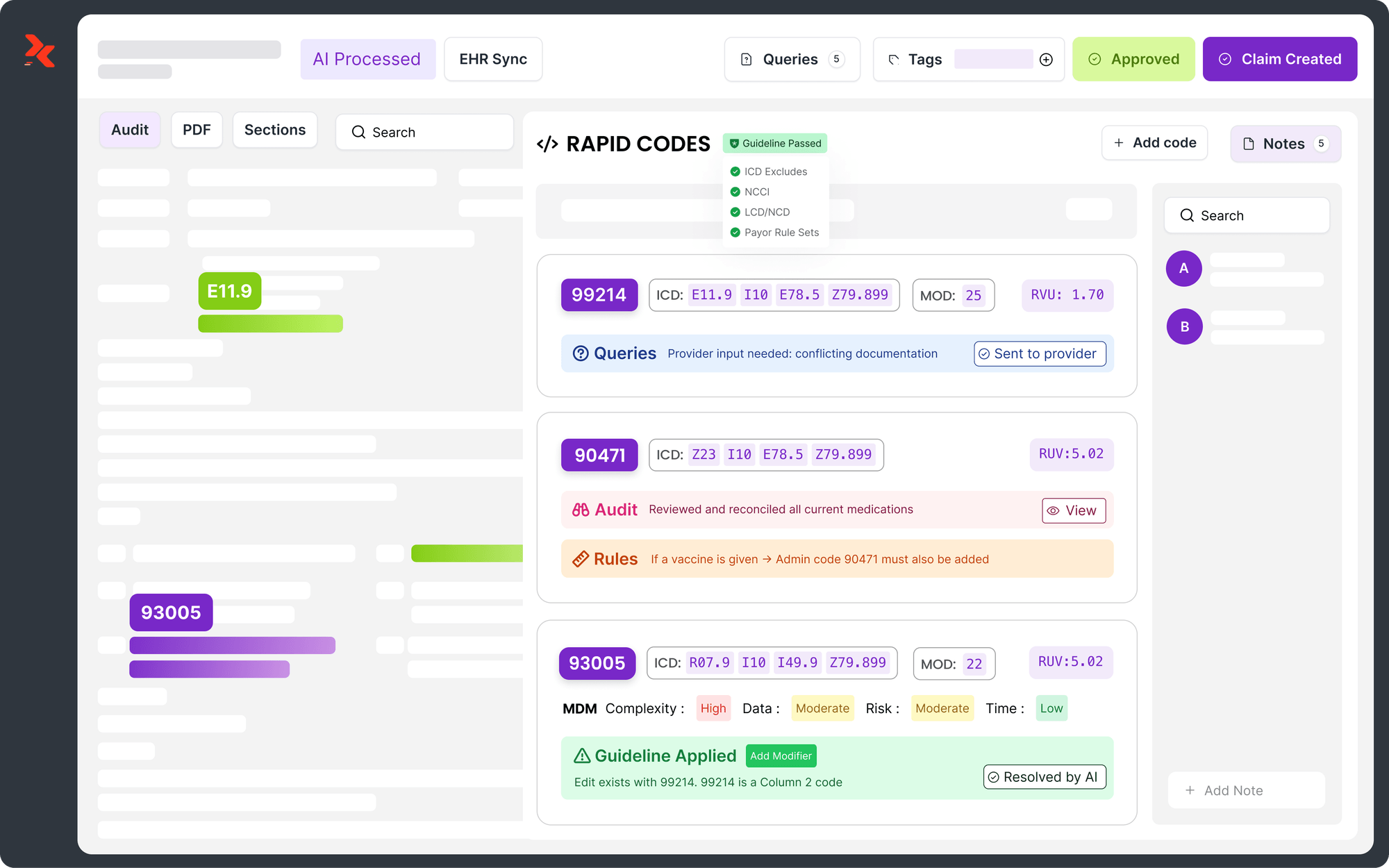

- AI-driven coding tools use NLP to read documentation and propose ICD-10/CPT/HCPCS codes, often paired with rule validation to check coding guidelines and payer edits.

The operational value comes from two outcomes that legacy CAC tools struggle to deliver simultaneously: coding speed and audit-ready traceability.

3. Claim validation & denial prevention

Claim denials aren't just a back-end problem. Front-end data quality, eligibility, and authorization issues create avoidable churn.

U.S. healthcare data shows that claim denials remain a significant operational challenge, with federal transparency data indicating that insurers deny around 19–20% of claims on average in ACA marketplace plans.

Modern AI denial prevention systems typically combine:

- Real-time eligibility and authorization checks (front-end prevention)

- Claim "risk scoring" to focus human review on high-likelihood denials

- Continuous updates from payer behavior (because edits and policies change)
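The risk-scoring step can be illustrated with a minimal Python sketch. It assumes a simple logistic model; the feature names, weights, bias, and review threshold are all hypothetical and chosen only for illustration. A production system would learn them from historical claims and remittance data.

```python
import math

# Hypothetical weights for illustration only; a real model would be
# trained on historical claims and remittance outcomes.
WEIGHTS = {
    "missing_prior_auth": 2.0,
    "eligibility_unverified": 1.5,
    "payer_recent_denials": 1.0,
}
BIAS = -3.0
REVIEW_THRESHOLD = 0.5  # assumed cutoff for routing a claim to human review


def denial_risk(features: dict[str, float]) -> float:
    """Return a denial probability between 0 and 1 from a logistic model."""
    z = BIAS + sum(WEIGHTS[name] * features.get(name, 0.0) for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))


def triage(claims: list[dict]) -> list[dict]:
    """Return claims at or above the threshold, highest risk first."""
    scored = [{**c, "risk": denial_risk(c["features"])} for c in claims]
    flagged = [c for c in scored if c["risk"] >= REVIEW_THRESHOLD]
    return sorted(flagged, key=lambda c: c["risk"], reverse=True)


claims = [
    {"id": "A", "features": {}},
    {"id": "B", "features": {"missing_prior_auth": 1, "eligibility_unverified": 1}},
    {"id": "C", "features": {"missing_prior_auth": 1, "payer_recent_denials": 1}},
]
for claim in triage(claims):
    print(claim["id"], round(claim["risk"], 2))
```

Only claims B and C cross the assumed threshold here, so reviewer time goes to them while claim A flows straight through.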

4. Risk adjustment & VBC documentation

Risk adjustment has become a moving target as CMS updates models. The operational challenge is not awareness. It's execution across distributed documentation workflows.

CMS is phasing in the updated risk adjustment model over multiple years.

- In the CY 2024 Rate Announcement, CMS describes how to blend risk scores between the current and updated models, with a phased approach that will continue into later years.

- CMS also continued the phase-in approach in the CY 2025 announcement, reinforcing that organizations must operate in a blended-model environment rather than a clean cutover.
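A blended-model environment comes down to a weighted average of two risk scores. The Python sketch below treats the phase-in weight as a parameter; the member scores and the weights in the loop are placeholders, and the actual blend percentages for each payment year should be taken from the corresponding CMS Rate Announcement.

```python
def blended_risk_score(score_prior: float, score_updated: float,
                       updated_weight: float) -> float:
    """Blend two model scores; updated_weight is the share given to the updated model."""
    if not 0.0 <= updated_weight <= 1.0:
        raise ValueError("updated_weight must be between 0 and 1")
    return (1.0 - updated_weight) * score_prior + updated_weight * score_updated


# Hypothetical member: 1.20 under the prior model, 1.00 under the updated one.
# The weights below are illustrative placeholders, not official values.
for weight in (0.33, 0.67, 1.0):
    print(weight, round(blended_risk_score(1.20, 1.00, weight), 3))
```

Because the same documentation can score differently under each model, teams typically need both scores per member until the phase-in completes.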

This is where AI in medicine becomes practical: it surfaces suspect conditions, prompts documentation before sign-off, and supports compliant querying aligned to CMS/AHIMA expectations.

Why Traditional Systems Fail to Operationalize AI

Most organizations didn't arrive at AI in medicine from a blank slate. They came after years of using computer-assisted coding (CAC), rules-based scrubbers, and bolt-on edits.

Those tools help with basic tasks, but they break down in two places that matter most in U.S. healthcare operations: documentation variability and payer volatility.

Documentation Variability

CAC tools were designed to suggest codes, not to carry end-to-end accountability for accuracy and compliance. That gap shows up in predictable ways.

- CAC-suggested codes can be triggered by words in labs or imaging reports that are not reportable without provider corroboration, especially for inpatient coding.

- Human verification also stays mandatory. Because CAC outputs still require close coder review, many teams see productivity gains plateau once the "easy" charts are handled.

Operationally, this means CAC often reduces keystrokes but doesn't reduce chart touches. Coding managers still carry the compliance risk, and audit readiness depends on manual judgment.

Payer Volatility

Claim scrubbers typically validate claims against a predefined rule set (ICD-10/CPT/HCPCS, NCCI edits, and payer-specific policies).

- That works for basic formatting and known issues, but the model has a structural weakness: the rule set is updated more slowly than payer policies change.

- Many scrubbers are updated periodically by vendors. When payer bulletins, prior authorization rules, or coverage policies shift, teams experience a lag where "clean" claims still deny.

In practice, rules-only prevention becomes a maintenance problem. Teams spend time managing edits rather than reducing denials.
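To make the lag concrete, here is a small Python sketch of a rules-only scrubber. The rule names, claim fields, and dates are invented for illustration and do not represent any real payer policy or vendor product.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable


@dataclass
class Rule:
    name: str
    effective: date  # date the rule was loaded into the scrubber
    check: Callable[[dict], bool]  # returns True when the claim passes


def scrub(claim: dict, rules: list[Rule], as_of: date) -> list[str]:
    """Return names of rules in force on `as_of` that the claim fails."""
    return [r.name for r in rules if r.effective <= as_of and not r.check(claim)]


# Hypothetical scenario: the payer starts requiring prior authorization on
# 1 March, but the vendor's rule update only reaches the scrubber on 1 April.
rules = [
    Rule("has_diagnosis", date(2025, 1, 1), lambda c: bool(c.get("dx_codes"))),
    Rule("prior_auth_present", date(2025, 4, 1), lambda c: bool(c.get("auth_id"))),
]
claim = {"dx_codes": ["E11.9"], "auth_id": None}

print(scrub(claim, rules, as_of=date(2025, 3, 15)))  # no failures: looks clean
print(scrub(claim, rules, as_of=date(2025, 4, 15)))  # rule loaded: claim fails
```

Between the policy change and the rule update, the scrubber passes a claim the payer would deny. A learning-based layer tries to close that window by picking up the pattern from remittance data instead of waiting for a manual rule edit.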

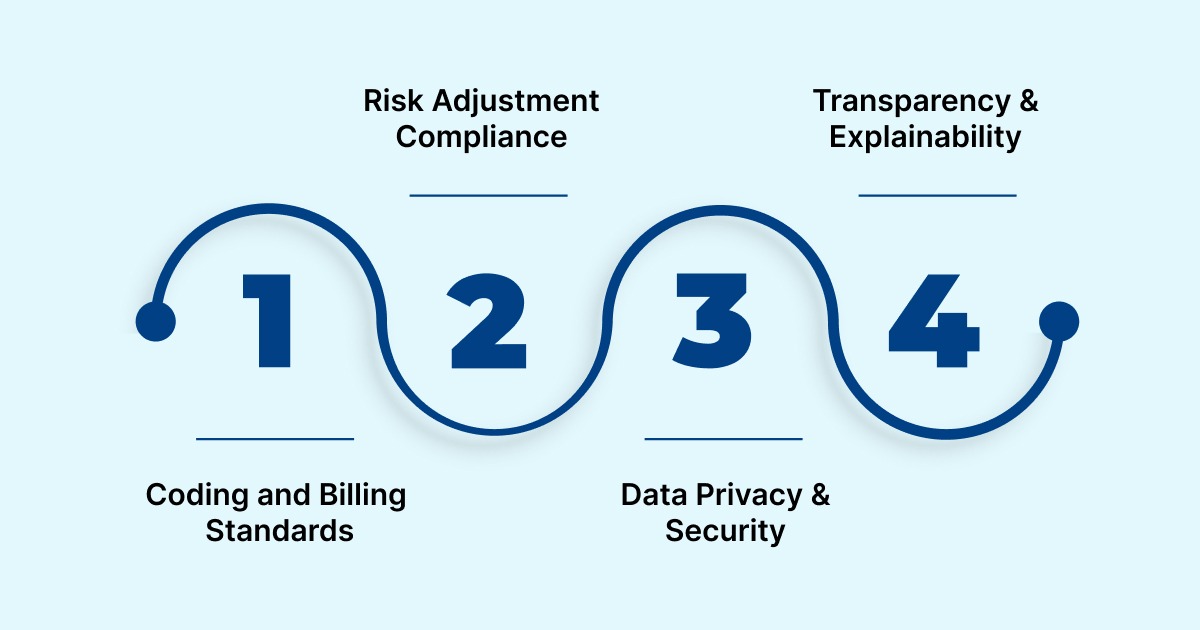

How AI Fits into U.S. Healthcare Compliance Frameworks

For healthcare organizations adopting AI in medicine, compliance shapes whether an AI deployment succeeds or creates risk.

AI systems that interpret clinical data, assign codes, or validate claims must operate within multiple, overlapping regulatory frameworks that directly impact reimbursement and audit readiness.

1. Coding and Billing Standards

AI outputs must align with established coding structures. Inaccurate coding isn't just an operational error but a compliance risk that drives improper payments.

For AI systems to be compliant:

- Every code must tie directly to source documentation.

- Edits must follow NCCI, LCD/NCD, and payer-specific policy logic.

- AI must support revision tracking and audit logs, not just output a suggestion.

This means AI used operationally in U.S. healthcare isn't generating codes in the abstract. It's producing defensible coded claims backed by documented rationale.

2. Risk Adjustment Compliance

Risk adjustment accuracy is a key compliance checkpoint for value-based care populations. The shift to HCC V28 increased emphasis on:

- Condition severity hierarchies

- Multiple body system representations

- Incorporation of SDOH markers

AI systems that integrate HCC logic directly into documentation review help coders and clinicians refine specificity before claim submission - a critical distinction from retrospective chart review.

3. Data Privacy & Security

AI workloads must conform to the same privacy and security requirements as all healthcare information systems.

Key points for compliance:

- Protected health information (PHI) must remain encrypted both in transit and at rest.

- Access must be tied to role-based permissions, not universal AI access.

- Comprehensive audit logs must record data changes and AI suggestions.

HIPAA's Security Rule requires safeguards at administrative, technical, and physical levels. This means AI systems must support fine-grained control, logging, and breach detection, not just accuracy.
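As a rough illustration of role-based access combined with audit logging, consider the Python sketch below. The roles, permission names, and log fields are hypothetical; a real deployment would rely on the organization's identity provider and a tamper-evident log store rather than in-memory structures.

```python
from datetime import datetime, timezone

# Hypothetical role-to-permission map for this sketch.
ROLE_PERMISSIONS = {
    "coder": {"view_note", "accept_suggestion"},
    "auditor": {"view_note", "view_audit_log"},
    "scheduler": set(),
}

audit_log: list[dict] = []


def authorize(user: str, role: str, action: str) -> bool:
    """Check a role's permission and record the attempt, allowed or not."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action,
        "allowed": allowed,
    })
    return allowed


print(authorize("user-1", "coder", "accept_suggestion"))
print(authorize("user-2", "scheduler", "view_note"))
print(len(audit_log))
```

Logging denied attempts as well as granted ones is a deliberate choice in this sketch: the record of who tried to reach PHI is often as useful in a review as the record of who did.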

4. Transparency & Explainability

Many healthcare compliance officers view "black-box AI" as unacceptable in a field where every claim, code, and documentation choice may be audited.

Explainable AI must provide:

- Line-level rationale for every coded element

- Contextual insights that link code decisions to specific narrative segments

- Exportable audit trails that external auditors or payers can review

These capabilities differentiate operational AI from research-oriented models that optimize accuracy without traceability.
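One way to picture documentation-to-code traceability is as a data structure in which no code can exist without evidence attached. The Python sketch below is an assumption about how such a record might look; the field names, sample note text, and version label are illustrative and not taken from any specific product.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class Evidence:
    note_line: int  # line number in the source note
    text: str       # the narrative segment supporting the code


@dataclass
class CodedElement:
    code: str
    rationale: str
    evidence: list[Evidence]
    model_version: str  # lets reviewers see which model or rule set produced the code


def export_audit_trail(elements: list[CodedElement]) -> str:
    """Serialize coded elements to JSON, rejecting any code without evidence."""
    for element in elements:
        if not element.evidence:
            raise ValueError(f"{element.code} has no supporting documentation")
    return json.dumps([asdict(e) for e in elements], indent=2)


trail = export_audit_trail([
    CodedElement(
        code="E11.9",
        rationale="Provider documents type 2 diabetes with no complications noted.",
        evidence=[Evidence(note_line=14, text="T2DM, well controlled on metformin")],
        model_version="example-0.1",
    )
])
print(trail)
```

The export is plain JSON so that an internal QA team or an external auditor could review it without access to the coding system itself.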

Ultimately, by operating within coding and regulatory frameworks, AI systems become reliable tools rather than liability vectors.

Real-World Results: What AI Delivers in Practice

In U.S. healthcare operations, the value of AI in medicine shows up in a few measurable places: cleaner claims, fewer denials, faster cash, and less time spent reworking documentation and coding.

Here's what "real impact" looks like when AI is embedded across coding, Clinical Documentation Improvement (CDI), and claim validation workflows.

1. Cleaner claims and less denial rework

When AI is used upstream (coding + edit validation), the first metric that moves is clean-claim performance.

RapidClaims reports outcomes such as:

- Clean claim rate improving from 92% to 99%, with a 96% first-pass yield in a multi-specialty physician group context.

- 27% reduction in claim denials and 70% reduction in cost to collect, tied to operational automation and prevention-oriented workflows.

These aren't abstract "AI benefits." They map directly to fewer touches per claim, fewer rebills, and shorter A/R cycles.

2. Coder capacity gains without sacrificing compliance

Operational AI proves itself when it reduces chart touches while keeping outputs defensible.

RapidClaims positions RapidCode around audited coding performance and automation speed, and pairs it with compliance coverage and explainability features (audit trails and rationale).

For coding leaders, this matters because productivity gains that aren't audit-ready often get clawed back later through QA rework and external review.

3. More complete documentation & improved risk capture

On the CDI and VBC side, impact is typically measured by the number of new conditions captured and RAF/quality performance.

RapidClaims highlights CDI outcomes, including:

- 45% new conditions identified (an indicator of missed specificity and under-capture in baseline workflows).

- RapidCDI's emphasis on point-of-care capture and fast value realization (weeks, not months) is critical during ongoing CMS model changes and blended risk-score periods.

These outcomes set a clear benchmark for evaluating AI platforms and expose which solutions can actually scale.

How Healthcare Leaders Should Evaluate AI Platforms

In 2026, the “AI in medicine” decision is primarily an operations decision: will this system reduce denials, speed reimbursement, and stay audit-ready without adding work? A practical evaluation should focus on four things.

1) Prove it can reduce denials, not just generate suggestions

As we already saw, denials remain a structural problem. So the test isn't "Does the AI find issues?" It's: Does it prevent denials before claims go out, and learn from remits, denials, and status responses?

Vendor questions:

- How is denial risk scored, and what inputs drive the score?

- How often are payer edits updated, and where does that update data come from?

- Can the system show which payer rule or pattern triggered an edit?

2) Check auditability against CMS error patterns

CMS continues to flag insufficient documentation as a key root cause in improper payments. This makes explainability non-negotiable for coding, CDI prompts, and claim edits.

Vendor questions:

- Can each code or edit be tied to specific documentation (line-level evidence)?

- Can audit trails be exported for internal QA and payer review?

- Are model/rule changes versioned so teams can explain “what changed” over time?

3) Validate integration readiness with real constraints

AI projects often stall on data flow, not models. In a 2025 Healthcare IT News report, 47% of healthcare leaders cited data quality and integration as major barriers to AI, and 39% cited regulatory/privacy concerns.

Vendor questions:

- Which EHR integration methods are supported (SMART on FHIR, HL7)?

- Which claim transactions are supported?

- What data is required on day one, and what is optional?

4) Demand fast, measurable time-to-value

A platform should show impact quickly on a small, controlled scope (specific specialties, payers, or claim types) with shared metrics.

KPIs to require in the first 30–60 days:

- First-pass clean claim rate

- Denial rate by payer/service line

- Cost-to-code or charts per coder per day

- CDI query acceptance rate and time saved

If a vendor can't commit to these operational measures early, the project risks becoming another "pilot that never scales."
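Two of these KPIs are simple enough to compute from a claims extract, which makes them easy to baseline before a pilot starts. The Python sketch below assumes a hypothetical record layout (`payer`, `denied_first_pass`) and defines first-pass clean claim rate as the share of claims not denied on first submission; organizations that define the metric differently would need to adjust it.

```python
from collections import defaultdict

# Hypothetical claim records; field names are assumptions for this sketch.
claims = [
    {"payer": "Payer A", "denied_first_pass": False},
    {"payer": "Payer A", "denied_first_pass": True},
    {"payer": "Payer B", "denied_first_pass": False},
    {"payer": "Payer B", "denied_first_pass": False},
]


def first_pass_clean_rate(records: list[dict]) -> float:
    """Share of claims that were not denied on first submission."""
    if not records:
        raise ValueError("no claims to measure")
    clean = sum(1 for r in records if not r["denied_first_pass"])
    return clean / len(records)


def denial_rate_by_payer(records: list[dict]) -> dict[str, float]:
    """First-submission denial rate, grouped by payer."""
    totals: dict[str, int] = defaultdict(int)
    denied: dict[str, int] = defaultdict(int)
    for r in records:
        totals[r["payer"]] += 1
        denied[r["payer"]] += int(r["denied_first_pass"])
    return {payer: denied[payer] / totals[payer] for payer in totals}


print(first_pass_clean_rate(claims))
print(denial_rate_by_payer(claims))
```

Running the same functions on the pre-pilot and post-pilot extracts gives both sides a shared, reproducible number to discuss.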

Ultimately, the decision to adopt AI in medicine comes down to operational fit, risk reduction, and measurable return.

Conclusion

In 2026, AI in medicine is no longer about experimentation or future promise. It is becoming core operational infrastructure for U.S. healthcare organizations that need to code faster, document more accurately, and reduce the risk of denial under increasing regulatory pressure.

The strongest results come when AI is embedded directly into documentation, coding, and claims workflows - not layered on as a disconnected tool.

If your team is evaluating how AI can strengthen coding accuracy, reduce denials, and deliver measurable revenue cycle gains, explore how RapidClaims applies AI across the full encounter-to-claim lifecycle.

Request a demo and get a focused walkthrough to help assess where automation delivers the fastest, lowest-risk impact for your operations.

FAQs

Q. How is AI in medicine different from traditional healthcare automation?

A. Traditional automation follows fixed rules. AI in medicine uses machine learning and NLP to interpret clinical context, adapt to payer behavior, and improve accuracy over time, especially in coding, CDI, and denial prevention.

Q. Can AI in medicine actually reduce claim denials, or does it only flag issues?

A. When deployed upstream, AI can prevent denials by validating documentation, eligibility, and payer rules before submission. Systems that learn from remittance data are more effective than tools that only flag errors after the fact.

Q. Is AI in medicine safe to use for regulated workflows like coding and billing?

A. Yes, if the AI is explainable and compliance-aware. Audit trails, documentation-to-code traceability, and alignment with CMS, ICD-10, CPT, and HCC rules are critical for safe operational use.

Q. How does AI in medicine support both fee-for-service and value-based care models?

A. AI improves FFS outcomes by increasing clean-claim rates and reducing denials, while supporting VBC by improving documentation specificity, HCC capture, and risk adjustment accuracy across encounters.

Q. What should healthcare organizations prepare before adopting AI in medicine?

A. Organizations should assess data integration readiness, define success metrics (denials, cost-to-code, RAF accuracy), and involve compliance and IT teams early to ensure AI fits existing workflows and governance standards.

Muyied Ulla Baig

Muyied Ulla Baig is a dedicated medical coder with 1 year of experience in E/M Outpatient, HCC, and Dental coding, supporting accurate risk adjustment and claims integrity through detailed and compliant coding processes at RapidClaims.
